The satire misses the mark since cars already have strict mandatory visibility requirements by law. In the EU, you must have working headlights, brake lights, turn signals, daytime running lights (since 2011), fog lights, reverse lights, and reflectors. Driving without any of these gets you fined, puts points on your license, and fails the vehicle inspection (TÜV/MOT). These aren't optional safety suggestions like cyclist hi-viz - they're legal requirements with real penalties.

I don't know about yankee laws...

Cringe

2020 called, they want their self-pity memes back

Brave browser is the solution.

You must be some kind of black belt master in black and white thinking 🤡. Biden literally got workers their first ever paid sick days after decades of not being able to even call in sick, pressured companies to drop their draconian attendance policies, and you're here crying about him 'abandoning the working class'? Lmao get real

US rail companies grant paid sick days after public pressure in win for unions https://www.theguardian.com/business/2023/may/01/railroad-workers-union-win-sick-leave?CMP=share_btn_url

When Joe Biden and Congress enacted legislation in December that blocked a threatened freight rail strike, many workers angrily faulted Biden for not ensuring that the legislation also guaranteed paid sick days. But since then, union officials say, members of the Biden administration, including the transportation secretary, Pete Buttigieg, and labor secretary, Marty Walsh, who stepped down on 11 March, lobbied the railroads, telling them it was wrong not to grant paid sick days.

“We’ve made a lot of progress,” said Greg Regan, president of the Transportation Trades Department of the AFL-CIO, the main US labor federation. “This is being done the right way. Each railroad is negotiating with each of its individual unions on this.”

How did dems abandon the working class?

Biden has been the most pro working class president since FDR.

They are so good at advertising Linux that they have 73% of desktop market share, while Linux has less than 5%, according to StatCounter.

🤯🤯

🥳🎉

Lol

Lol

Yes that is the point behind the 'you wouldn't download a car' meme 🙂

It's funny you mention the Katy Perry chord case, because Damien Riehl, who made the argument I referenced in my original post, actually talked about this exact case in the podcast I mentioned. He noted that Katy Perry was initially sued and a jury awarded $2.8 million over a very simple melody that appeared over 8,000 times in Riehl's dataset of generated melodies. However, after Riehl gave his TED talk about his "All the Music" project in early 2020, the judge reversed the jury verdict, saying the melody was unoriginal and therefore uncopyrightable.

Thank you

And they've downloaded and read millions of books without paying for them.

Do you have a source on that?

OpenAI, like other AI companies, keeps its data sources confidential. But there are services and commercial book databases that are widely understood to be commonly used in the AI industry.

I am thrilled to see the output you get!

The Irony of 'You Wouldn't Download a Car' Making a Comeback in AI Debates

Those claiming AI training on copyrighted works is "theft" misunderstand key aspects of copyright law and AI technology. Copyright protects specific expressions of ideas, not the ideas themselves. When AI systems ingest copyrighted works, they're extracting general patterns and concepts - the "Bob Dylan-ness" or "Hemingway-ness" - not copying specific text or images.

This process is akin to how humans learn by reading widely and absorbing styles and techniques, rather than memorizing and reproducing exact passages. The AI discards the original text, keeping only abstract representations in "vector space". When generating new content, the AI isn't recreating copyrighted works, but producing new expressions inspired by the concepts it's learned.

This is fundamentally different from copying a book or song. It's more like the long-standing artistic tradition of being influenced by others' work. The law has always recognized that ideas themselves can't be owned - only particular expressions of them.

Moreover, there's precedent for this kind of use being considered "transformative" and thus fair use. The Google Books project, which scanned millions of books to create a searchable index, was ruled legal despite protests from authors and publishers. AI training is arguably even more transformative.

While it's understandable that creators feel uneasy about this new technology, labeling it "theft" is both legally and technically inaccurate. We may need new ways to support and compensate creators in the AI age, but that doesn't make the current use of copyrighted works for AI training illegal or unethical.

For those interested, this argument is nicely laid out by Damien Riehl in FLOSS Weekly episode 744. https://twit.tv/shows/floss-weekly/episodes/744

Time will prove you wrong

The anti-AI sentiment in the free software communities is concerning.

Whenever AI is mentioned, lots of people in the Linux space immediately react negatively. Creators like TheLinuxExperiment on YouTube always feel the need to add a disclaimer that "some people think AI is problematic" or something along those lines if an AI topic is discussed. I get that AI has many problems, but at the same time the potential it has is immense, especially as an assistant on personal computers (just look at what "Apple Intelligence" seems to be capable of). GNOME and other desktops need to start working on integrating FOSS AI models so that we don't become obsolete. Using an AI-less desktop may be akin to hand-copying books after the printing press revolution. If you think of specific problems, it is better to point them out and try to think of solutions, not reject the technology as a whole.

TLDR: A lot of Luddite sentiment around AI in the Linux community.

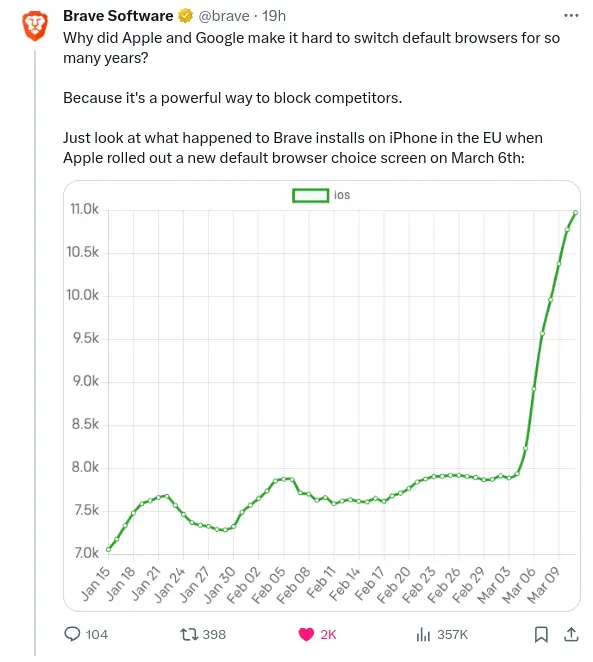

The DMA is already having an impact. Brave Browser installs surge after the introduction of the browser choice splash screen on iOS.

https://www.macrumors.com/2024/03/13/brave-browser-rise-installs-ios-14-7-eu/

Brave just released their own privacy-preserving AI assistant named Leo.

You can test it in Brave Nightly 1.59.